Beyond Microservices: Choosing the Right Architecture Pattern for Your Next AI-Native App

TL;DR: Traditional microservices are no longer the default for high-scale AI applications. To build truly AI-native systems in 2026, CTOs must look toward agentic patterns, data mesh architectures, and stateful serverless compute. This post breaks down the transition from request-response models to autonomous, inference-heavy workflows that define modern Deep Engineering.

---

For the last decade, the "Golden Path" for any custom software development company was clear: break the monolith, implement microservices, and orchestrate with Kubernetes. It was a reliable formula for scalability and deployment independence.

But the landscape has shifted. We are no longer building apps that simply store and retrieve data; we are building systems that reason, act, and evolve. As we move deeper into 2026, the industry has realized that traditional microservices often introduce unnecessary latency and complexity when handling the heavy compute and data requirements of AI.

At Netling Digital, we believe that the next generation of software requires a "Deep Engineering" approach, one that integrates intelligence into the very fabric of the architecture rather than treating it as a third-party plugin.

The Death of the "AI-as-a-Service" Wrapper

In the early 2020s, "AI integration" usually meant a REST call to a general-purpose Large Language Model (LLM). Today, that approach is dead. "Why traditional web development is dead" isn't just a provocative headline; it’s a technical reality.

Traditional architectures are optimized for I/O-bound tasks. AI-native apps, however, are inference-bound and context-heavy. When your application logic depends on multi-step reasoning by agents, a standard microservice pattern often leads to "Distributed Monolith" syndrome, where services are nominally independent but tightly coupled by the shared context they need to function.

1. The Agentic Architecture Pattern

The most significant shift in software architecture patterns is the move from "Co-pilot" (human-led) to "Agentic" (autonomous) systems. An agentic architecture isn't just a collection of services; it’s a network of autonomous entities capable of reasoning, using tools, and correcting their own errors.

Key Components of Agentic Systems:

- Orchestration Layer: Unlike a standard API gateway, this layer manages the "thought process" of the agents, handling loops and state transitions.

- Tool-Use Interfaces: Cleanly defined interfaces that allow AI agents to interact with legacy databases or external APIs securely.

- Human-in-the-Loop (HITL) Triggers: Architectural "checkpoints" where high-stakes decisions are paused for human verification.

Visualizing the Agentic Workflow: Beyond linear request-response to iterative reasoning loops.
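
The three components above can be sketched as a single loop. This is a minimal illustration rather than a production design: `Step`, `Tool`, `run_agent`, and the scripted planner are hypothetical names, and the planner stands in for a real model call. The loop plans, pauses high-stakes tool calls for approval, feeds errors back as observations so the next step can correct them, and stops at a step budget.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    """One planner decision: call a tool, or finish with an answer."""
    action: str  # "tool" or "finish"
    tool: str = ""
    args: dict = field(default_factory=dict)
    answer: str = ""


@dataclass
class Tool:
    """A narrow interface onto a database or external API."""
    run: Callable[[dict], str]
    high_stakes: bool = False  # True means a human must approve the call


def run_agent(goal, planner, tools, approve, max_steps=5):
    """Orchestration loop: plan, gate, act, observe, repeat."""
    history = []  # (tool name, observation) pairs the planner reasons over
    for _ in range(max_steps):
        step = planner(goal, history)
        if step.action == "finish":
            return step.answer, history
        tool = tools.get(step.tool)
        if tool is None:
            history.append((step.tool, "error: unknown tool"))
            continue
        # Human-in-the-loop checkpoint for high-stakes actions.
        if tool.high_stakes and not approve(step):
            history.append((step.tool, "rejected by human reviewer"))
            continue
        try:
            observation = tool.run(step.args)
        except Exception as exc:  # surface the failure so the planner can adapt
            observation = f"error: {exc}"
        history.append((step.tool, observation))
    return "stopped: step budget exhausted", history


def scripted_planner(goal, history):
    """Stand-in for a model: look up the order, request a refund, then finish."""
    if not history:
        return Step("tool", tool="lookup_order", args={"order_id": "A1"})
    if len(history) == 1:
        return Step("tool", tool="issue_refund", args={"order_id": "A1"})
    return Step("finish", answer=f"done after {len(history)} tool calls")


TOOLS = {
    "lookup_order": Tool(run=lambda a: f"order {a['order_id']} is delivered"),
    "issue_refund": Tool(run=lambda a: f"refunded {a['order_id']}", high_stakes=True),
}

# The reviewer declines the refund, so the agent records the rejection and moves on.
answer, trace = run_agent(
    "refund order A1", scripted_planner, TOOLS, approve=lambda step: False
)
```

Keeping the approval gate in the orchestration layer, rather than inside each tool, means the checkpoint policy can change without touching the tool interfaces.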

2. Moving to a Data Mesh Architecture

In an AI-native world, data is not just a byproduct; it is the primary fuel. The traditional centralized data warehouse creates a bottleneck for real-time inference. This is where Data Mesh comes in.

Instead of one massive data lake, Data Mesh treats data as a product owned by specific domain teams. For a CTO, this means your "Customer Service AI" and your "Inventory Forecasting AI" manage their own specialized data pipelines. This decentralization ensures that the data used for Retrieval-Augmented Generation (RAG) is always fresh, accurate, and contextually relevant to the specific task.
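
As a rough sketch of "data as a product", the snippet below models a domain-owned dataset that publishes an explicit freshness limit, plus a registry that a RAG pipeline could query. Every name here (`Record`, `DataProduct`, `Mesh`) is hypothetical, and the keyword match is a placeholder for whatever vector index a real system would use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Record:
    text: str
    updated_at: datetime


@dataclass
class DataProduct:
    """A domain-owned dataset published with an explicit freshness contract."""
    domain: str
    max_age: timedelta
    records: list

    def fresh_records(self, now):
        """Return only records that are within the product's freshness limit."""
        return [r for r in self.records if now - r.updated_at <= self.max_age]


class Mesh:
    """Registry through which consumers discover data products by domain."""

    def __init__(self):
        self._products = {}

    def publish(self, product):
        self._products[product.domain] = product

    def retrieve(self, domain, query, now):
        """Naive keyword retrieval over fresh records only."""
        product = self._products[domain]
        terms = query.lower().split()
        return [
            r.text
            for r in product.fresh_records(now)
            if any(term in r.text.lower() for term in terms)
        ]


now = datetime(2026, 1, 15, tzinfo=timezone.utc)
mesh = Mesh()
mesh.publish(DataProduct(
    domain="inventory",
    max_age=timedelta(hours=1),
    records=[
        Record("SKU-42 stock: 17 units", now - timedelta(minutes=5)),
        Record("SKU-42 stock: 90 units", now - timedelta(days=2)),  # stale
    ],
))

context = mesh.retrieve("inventory", "SKU-42 stock", now)
```

Here the two-day-old stock figure never reaches the prompt, because the owning domain, not the consumer, decides what counts as fresh.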

3. Stateful Serverless and Edge Convergence

Latency is the enemy of a great user experience. In 2026, waiting 3 seconds for an LLM response is unacceptable. AI-native apps are moving toward Stateful Serverless and Edge Inference.

By leveraging durable functions and managed stateful queues, we can maintain the context of an AI conversation without the overhead of managing dedicated virtual machines. Furthermore, pushing the inference layer to the edge, by running smaller, specialized models (domain-specific language models, or DSLMs) directly in the CDN, can bring latency down to milliseconds.

This is a core part of our AI-native MVP approach, where we prioritize speed-to-inference from day one.
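
To make the stateful-serverless idea concrete, here is a small sketch in which the handler itself holds no state between invocations and all conversation context lives in an external store. `DurableStore` is a hypothetical in-memory stand-in for a managed key-value service or durable-function state, and `model` is any callable that takes the message list and returns a reply.

```python
import json


class DurableStore:
    """In-memory stand-in for a managed, durable key-value store."""

    def __init__(self):
        self._data = {}

    def load(self, key):
        raw = self._data.get(key)
        return json.loads(raw) if raw else []

    def save(self, key, value):
        # Serialize on write, as a real remote store would require.
        self._data[key] = json.dumps(value)


def handle_turn(store, session_id, user_message, model, max_messages=20):
    """Stateless entry point: load context, call the model, persist context."""
    context = store.load(session_id)
    context.append({"role": "user", "content": user_message})
    reply = model(context)
    context.append({"role": "assistant", "content": reply})
    # Keep only the most recent messages so stored state stays bounded.
    store.save(session_id, context[-max_messages:])
    return reply


def counting_model(context):
    """Toy model: reports how many user messages it can see in the context."""
    seen = sum(1 for message in context if message["role"] == "user")
    return f"seen {seen} user messages"


store = DurableStore()
first = handle_turn(store, "session-1", "hi", counting_model)
second = handle_turn(store, "session-1", "still there?", counting_model)
other = handle_turn(store, "session-2", "hello", counting_model)
```

Because each call reloads its context by session ID, any instance in any region can serve the next turn of the conversation.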

The "Deep Engineering" Difference

At Netling Digital, we don't just "build apps." We practice Deep Engineering. This means we look beyond the UI/UX to the non-functional requirements that make or break a system at scale:

- Cost-Efficiency First: We architect systems to use the most efficient model for the task, for example a $0.01 DSLM for classification instead of a $0.10 general LLM.

- Observability & Auditability: AI decisions shouldn't be a black box. Our architectures include comprehensive trace logging for every agentic decision.

- Graceful Degradation: If an AI service is down or a model hallucinates, the system must have a deterministic fallback.
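
The three requirements above can be combined in one small router. This is an illustrative sketch with hypothetical names (`Model`, `route`), not a description of any specific framework: it tries capable models cheapest-first, logs every attempt to a trace, and ends in a deterministic rule when every model fails. The costs reuse the $0.01 and $0.10 figures from the list, and a `None` result is assumed to mean the output failed validation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Model:
    name: str
    cost: float  # cost per call, in dollars
    handles: set  # task types this model is trusted with
    call: Callable[[str], Optional[str]]  # None means the output failed validation


def route(task, prompt, models, fallback, trace):
    """Try capable models cheapest-first; fall back deterministically."""
    candidates = sorted(
        (m for m in models if task in m.handles), key=lambda m: m.cost
    )
    for model in candidates:
        try:
            result = model.call(prompt)
        except Exception as exc:  # outage or timeout: log it and try the next model
            trace.append({"model": model.name, "outcome": f"error: {exc}"})
            continue
        if result is not None:
            trace.append({"model": model.name, "outcome": "ok", "cost": model.cost})
            return result
        trace.append({"model": model.name, "outcome": "rejected"})
    trace.append({"model": "fallback", "outcome": "ok", "cost": 0.0})
    return fallback(prompt)


def unavailable(prompt):
    raise TimeoutError("model unavailable")


def keyword_rule(prompt):
    """Deterministic fallback: a plain keyword rule, no model involved."""
    return "billing" if "invoice" in prompt.lower() else "unknown"


healthy = [
    Model("general-llm", 0.10, {"classify", "summarize"}, lambda p: "billing"),
    Model("dslm", 0.01, {"classify"}, lambda p: "billing"),
]
degraded = [
    Model("general-llm", 0.10, {"classify", "summarize"}, unavailable),
    Model("dslm", 0.01, {"classify"}, unavailable),
]

healthy_trace, degraded_trace = [], []
label = route("classify", "Where is my invoice?", healthy, keyword_rule, healthy_trace)
degraded_label = route(
    "classify", "Where is my invoice?", degraded, keyword_rule, degraded_trace
)
```

In the healthy case only the cheaper model is called; in the degraded case the trace shows both failures followed by the rule-based answer, which is the audit trail the observability point asks for.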

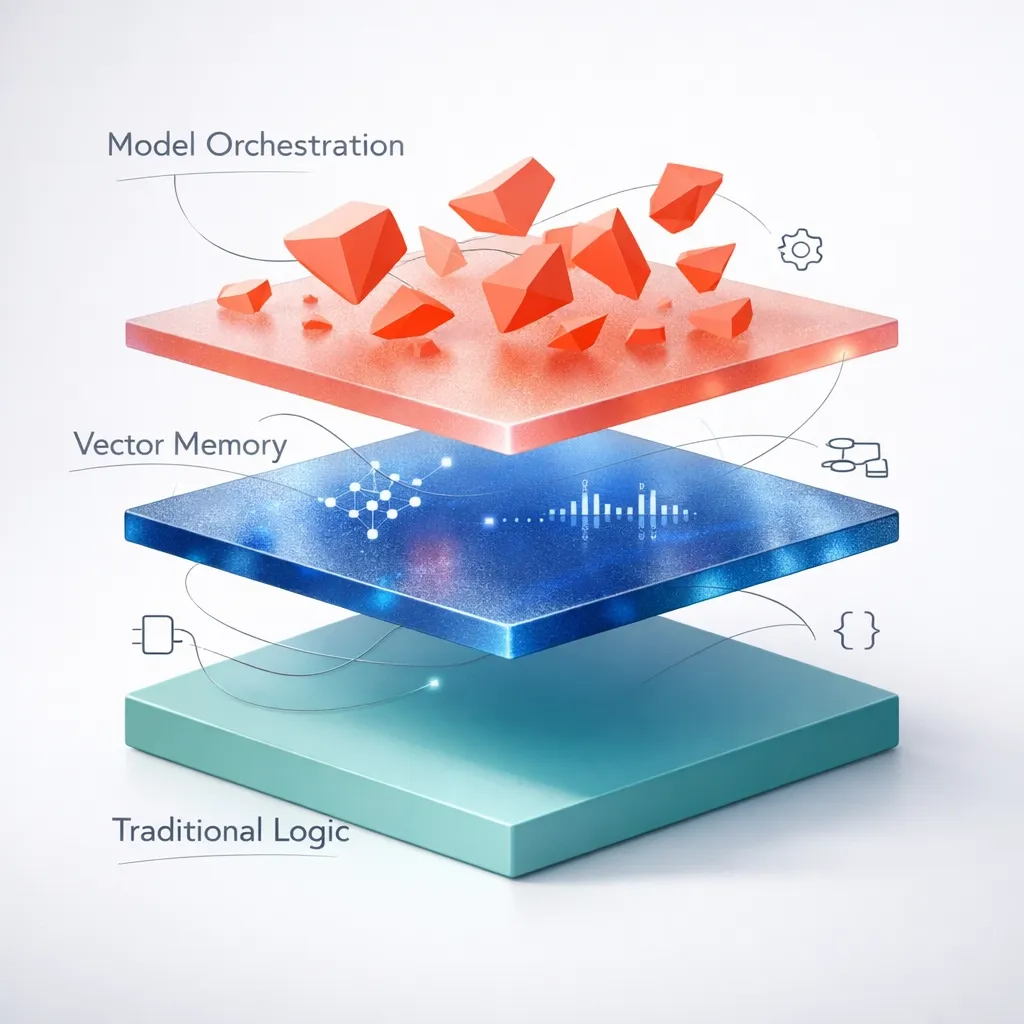

Deep Engineering Architecture: Balancing model orchestration, vector memory, and traditional logic.

Choosing the Right Pattern for Your Business

Not every project needs a full agentic mesh. The right software architecture pattern depends on your growth stage and the technical debt you already carry.

Strategic Guidance for VPs and CTOs

When you are leading an engineering organization, the pressure to "do AI" is immense. However, the risk of building on the wrong foundation is even higher. Rigid architectures lead to "AI Debt", a situation where your models are smart, but your system is too brittle to update them.

As a custom software development company, Netling Digital specializes in helping technical leaders navigate these choices. Our approach rests on meticulous craft and engineering rigor, so that what we ship today remains scalable three years from now.

No Surprises, Just Scale

We understand the fear of hidden overhead and rigid structures. Our methodology is built on transparency. We don't hide behind jargon; we provide clear, deterministic roadmaps for complex builds. Whether you are scaling a startup or transforming an enterprise, the goal is the same: stable, fast, and intelligent software.

Ready to Architect for the Future?

The transition from microservices to AI-native patterns isn't just a trend; it's a fundamental re-engineering of how software functions. If you're looking for a partner who understands the "Deep Engineering" required to build resilient, agentic systems, we should talk.

Ship smarter. Scale faster. Transform your stack.

- Explore our Services

- See Our Approach

- Contact us for a Strategic Consultation

More Articles

Continue reading from our engineering blog.

Complete Guide to MVP Development for Startups 2026

Building an MVP in 2026 isn't just about cutting features; it's about leveraging AI-native workflows and high-level engineering craft to ship products that validate and scale.

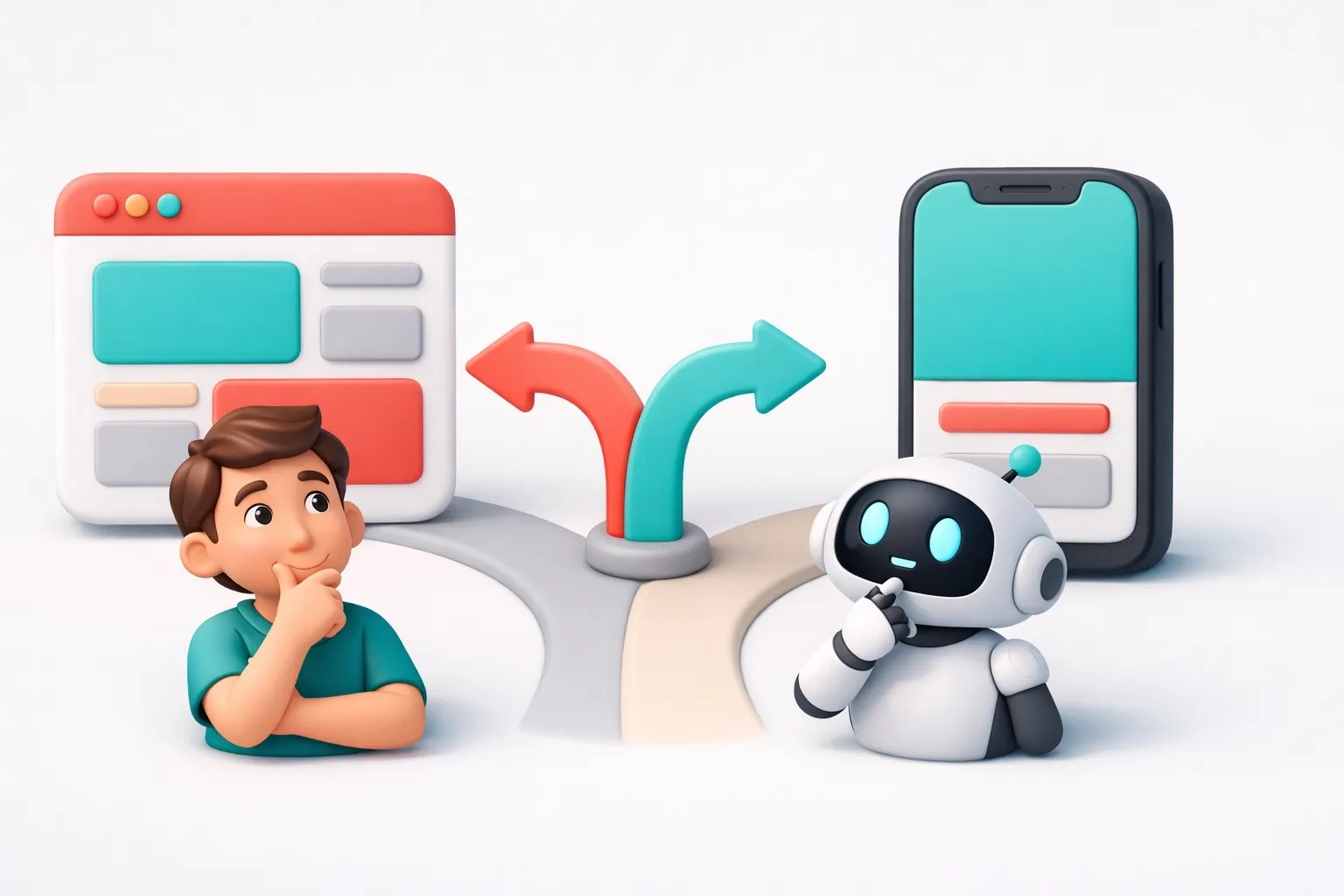

Web App vs Mobile App: Which Should You Build First?

Is the "Mobile First" mantra still relevant? We compare web apps vs. native mobile apps across cost, speed, and UX to help founders make the right strategic bet.

From Chaos to Clarity: A Masterclass in Requirement Elicitation for Complex Custom Software

Most software projects don't fail because of bad code; they fail because of misunderstood requirements. This masterclass breaks down the framework for turning chaotic visions into precise technical blueprints.